AI Bias in Recruiting: How to Identify and Avoid It

AI doesn't have opinions. But it can have biases. When an AI screening tool consistently favors certain groups over others — not based on qualifications — that's AI bias. And in recruiting, it can cause real harm.

This isn't a theoretical risk. It has happened. It continues to happen. Understanding how and why is the first step to preventing it.

What Is AI Bias?

AI bias occurs when an algorithm produces systematically unfair outcomes. In recruiting, this means qualified candidates get lower scores — or get filtered out entirely — because of characteristics unrelated to job performance.

These characteristics include gender, age, ethnicity, disability status, name origin, and even zip code. The AI doesn't need to be explicitly programmed to discriminate. It learns patterns from data. If the data contains bias, the AI reproduces it.

Bias in AI recruiting is not always obvious. A tool might never mention gender. But if it penalizes career gaps (which disproportionately affect women) or favors graduates from elite universities (which skew toward higher-income demographics), the effect is discriminatory.

How AI Bias Occurs in Recruiting

There are three primary sources of bias in AI recruiting systems.

1. Training Data Bias

AI learns from historical data. If a company historically hired mostly men for engineering roles, the AI learns that male candidates are "better fits." It's not malicious. It's math applied to skewed data.

The AI optimizes for patterns it sees. If the pattern is biased, the output is biased.

2. Feature Selection Bias

Which data points does the AI consider? If it weights university prestige heavily, it indirectly discriminates against first-generation students. If it considers "years since graduation," it creates age bias.

Even seemingly neutral features can be proxies for protected characteristics. Zip codes correlate with ethnicity and income. Hobbies can signal gender. Name patterns reveal ethnic background.

3. Measurement Bias

How do you define "good hire"? If success is measured by tenure, you might bias against employees who were promoted out of the role quickly. If success is measured by manager ratings, you inherit the biases of those managers.

The metrics you optimize for shape the bias your AI develops.

Real-World Examples

Amazon's Recruiting AI (2018)

Amazon built an AI recruiting tool trained on 10 years of hiring data. The system learned to penalize CVs containing the word "women's" — as in "women's chess club" or "women's college." It downgraded graduates from two all-women's universities.

Amazon scrapped the project. The lesson: historical hiring data reflects historical biases. Training AI on that data automates discrimination at scale.

Austrian Employment Service (AMS, 2019)

Austria's public employment service developed an algorithm to classify job seekers. Women received systematically lower scores than men with identical qualifications. The system also penalized people with disabilities and non-EU citizens.

The project was paused after public outcry and legal challenges. The algorithm had learned from labor market outcome data — which reflected existing discrimination in the labor market.

Resume Whitening Studies

Multiple studies show that candidates with ethnic-minority-sounding names receive 30-50% fewer callbacks than candidates with identical CVs and white-sounding names. These studies tested human reviewers, but an AI system trained on those human hiring decisions can learn and reproduce the same pattern.

A 2024 study by researchers at the University of Washington found that large language models show measurable bias when ranking CVs with names associated with different racial groups — even when explicitly instructed to ignore names.

Age Bias in Job Platforms

In 2023, research revealed that several major job platforms' recommendation algorithms systematically showed fewer senior-level candidates to recruiters searching for "energetic" or "dynamic" team members. The AI associated these terms with younger candidates.

How to Detect AI Bias

Detecting bias requires structured analysis. Here are 4 practical methods.

Demographic Parity Testing

Run a large set of CVs through the system and compare average scores across demographic groups. If one group consistently scores lower even though its qualifications are comparable, bias is present.

You need sufficient sample size — at least 100 CVs per group — for meaningful results.
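To make this concrete, here is a minimal Python sketch of a parity check. It assumes you already have a list of (group, score) pairs from a test run and a pass mark for the shortlist; the function name parity_report and the threshold of 70 are illustrative, not part of any particular tool. Besides average scores, it computes each group's pass rate relative to the best-performing group. A common rule of thumb, the "four-fifths rule" from the US Uniform Guidelines on Employee Selection Procedures, treats a ratio below 0.8 as a sign of adverse impact worth investigating.

```python
from statistics import mean


def parity_report(results, threshold=70.0):
    """Compare mean scores and pass rates across demographic groups.

    results:   list of (group, score) pairs from one test run of the screening tool
    threshold: hypothetical pass mark for the shortlist
    """
    scores_by_group = {}
    for group, score in results:
        scores_by_group.setdefault(group, []).append(score)

    report = {}
    for group, scores in scores_by_group.items():
        passed = sum(score >= threshold for score in scores)
        report[group] = {
            "n": len(scores),
            "mean_score": mean(scores),
            "pass_rate": passed / len(scores),
        }

    # Impact ratio: each group's pass rate relative to the group with the highest rate.
    best_rate = max(r["pass_rate"] for r in report.values())
    for r in report.values():
        r["impact_ratio"] = r["pass_rate"] / best_rate if best_rate else 1.0
    return report


if __name__ == "__main__":
    # Toy data for illustration only; a real test needs 100+ CVs per group.
    results = [("A", 82), ("A", 75), ("A", 64), ("A", 90),
               ("B", 71), ("B", 58), ("B", 66), ("B", 73)]
    for group, r in parity_report(results).items():
        flag = "  <- below 0.8, investigate" if r["impact_ratio"] < 0.8 else ""
        print(group, r, flag)
```

With the toy data, group B passes half as often as it is scored and reaches an impact ratio of about 0.67 against group A. Eight CVs prove nothing; the sample only shows the mechanics.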

Counterfactual Testing

Take the same CV. Change only the name, gender indicators, or university. Run it through the AI again. If scores change, the system is using protected characteristics (directly or via proxies).

This is the simplest and most revealing test.
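A counterfactual test is easy to script. The sketch below assumes a function score_fn that sends CV text to your screening tool and returns a number; that function is a hypothetical stand-in, and toy_scorer is a deliberately biased toy model included only so the example runs. The test swaps one attribute at a time and reports how far the score moves.

```python
from typing import Callable


def counterfactual_deltas(cv_text: str,
                          score_fn: Callable[[str], float],
                          swaps: dict[str, list[str]]) -> dict[str, float]:
    """Re-score copies of one CV in which a single attribute is swapped.

    Returns {"original -> alternative": score difference vs. the unchanged CV}.
    Any non-zero difference means the swapped attribute influences the score.
    """
    baseline = score_fn(cv_text)
    deltas = {}
    for original, alternatives in swaps.items():
        for alternative in alternatives:
            variant = cv_text.replace(original, alternative)
            deltas[f"{original} -> {alternative}"] = score_fn(variant) - baseline
    return deltas


def toy_scorer(cv_text: str) -> float:
    """Deliberately biased toy model: rewards a skill, wrongly penalises a surname."""
    score = 50.0
    if "Python" in cv_text:
        score += 30.0
    if "Yilmaz" in cv_text:
        score -= 10.0
    return score


if __name__ == "__main__":
    cv = "Name: Anna Schmidt\nSkills: Python, SQL\nExperience: 5 years"
    swaps = {"Anna Schmidt": ["Aylin Yilmaz", "Jan Kowalski"]}
    print(counterfactual_deltas(cv, toy_scorer, swaps))
    # {'Anna Schmidt -> Aylin Yilmaz': -10.0, 'Anna Schmidt -> Jan Kowalski': 0.0}
```

If the tool is built on a language model, scores can vary between identical runs. Score each variant several times and compare the difference against that run-to-run noise before drawing conclusions.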

Outcome Monitoring

Track hiring outcomes over time. If AI-screened candidate pools are less diverse than your applicant pool, the screening step introduces bias.

Compare: What does the applicant pool look like? What does the shortlist look like? If diversity drops significantly at the AI screening step, investigate.
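Here is one way to put numbers on that comparison, as a minimal Python sketch. It assumes you have group labels for applicants and for the AI shortlist, for example from voluntary, anonymised self-identification data; the function name stage_representation and the 0.8 cut-off in the example are illustrative choices, not a standard.

```python
from collections import Counter


def stage_representation(applicants, shortlist):
    """Compare each group's share of the applicant pool with its share of the shortlist.

    applicants, shortlist: lists of group labels, one entry per person.
    Returns {group: (applicant_share, shortlist_share, shortlist_share / applicant_share)}.
    """
    applicant_counts = Counter(applicants)
    shortlist_counts = Counter(shortlist)
    report = {}
    for group, count in applicant_counts.items():
        applicant_share = count / len(applicants)
        shortlist_share = shortlist_counts[group] / len(shortlist) if shortlist else 0.0
        report[group] = (applicant_share, shortlist_share, shortlist_share / applicant_share)
    return report


if __name__ == "__main__":
    applicants = ["women"] * 40 + ["men"] * 60
    shortlist = ["women"] * 5 + ["men"] * 15
    for group, (pool, short, ratio) in stage_representation(applicants, shortlist).items():
        note = "  <- representation drops at screening" if ratio < 0.8 else ""
        print(f"{group}: {pool:.0%} of applicants, {short:.0%} of shortlist{note}")
```

Running the same comparison per role and per review period turns a one-off check into the trend that outcome monitoring relies on.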

Third-Party Audits

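Independent bias audits by qualified researchers provide the most rigorous assessment. The EU AI Act classifies AI used for recruitment and candidate selection as high-risk and requires providers to examine training data for possible biases; an independent audit is the most credible way to show that this work has actually been done. Consider annual audits at minimum.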

Countermeasures: Reducing AI Bias

Eliminating bias completely is unrealistic. Reducing it to acceptable levels is achievable. Here's how.

1. Diversify Training Data

If your training data overrepresents certain groups, balance it. Use synthetic data augmentation or ensure your dataset reflects the diversity you want in outcomes.
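Collecting more balanced data is not always possible. A lighter-weight alternative, swapped in here as an illustration rather than something the steps above prescribe, is "reweighing" (Kamiran and Calders): each training example gets a weight so that, in the weighted data, group membership and the hiring outcome are statistically independent. The sketch below assumes you know each historical candidate's group and whether they were hired; the function name is hypothetical.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Sample weights that make group membership independent of the label.

    weight(g, y) = P(g) * P(y) / P(g, y)
    Combinations that are rarer than independence would predict (e.g. hired
    candidates from an under-hired group) get weights above 1; over-represented
    combinations get weights below 1.
    """
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] / n) * (label_counts[y] / n) / (joint_counts[(g, y)] / n)
        for g, y in zip(groups, labels)
    ]


if __name__ == "__main__":
    groups = ["m"] * 6 + ["f"] * 4
    hired = [1, 1, 1, 1, 0, 0] + [1, 0, 0, 0]   # historical hire rate: 67% vs. 25%
    weights = reweighing_weights(groups, hired)
    for g in ("m", "f"):
        idx = [i for i, grp in enumerate(groups) if grp == g]
        rate = sum(weights[i] * hired[i] for i in idx) / sum(weights[i] for i in idx)
        print(g, round(rate, 2))   # weighted hire rate is 0.5 for both groups
```

The resulting list can be passed to a training routine that accepts per-example weights, such as the sample_weight argument of scikit-learn's LogisticRegression.fit. Reweighing only balances outcomes across groups in the training data; it does not repair a biased definition of a "good hire."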

2. Remove Proxy Variables

Identify and exclude features that correlate with protected characteristics without predicting job performance. University name, zip code, and graduation year are common proxies.

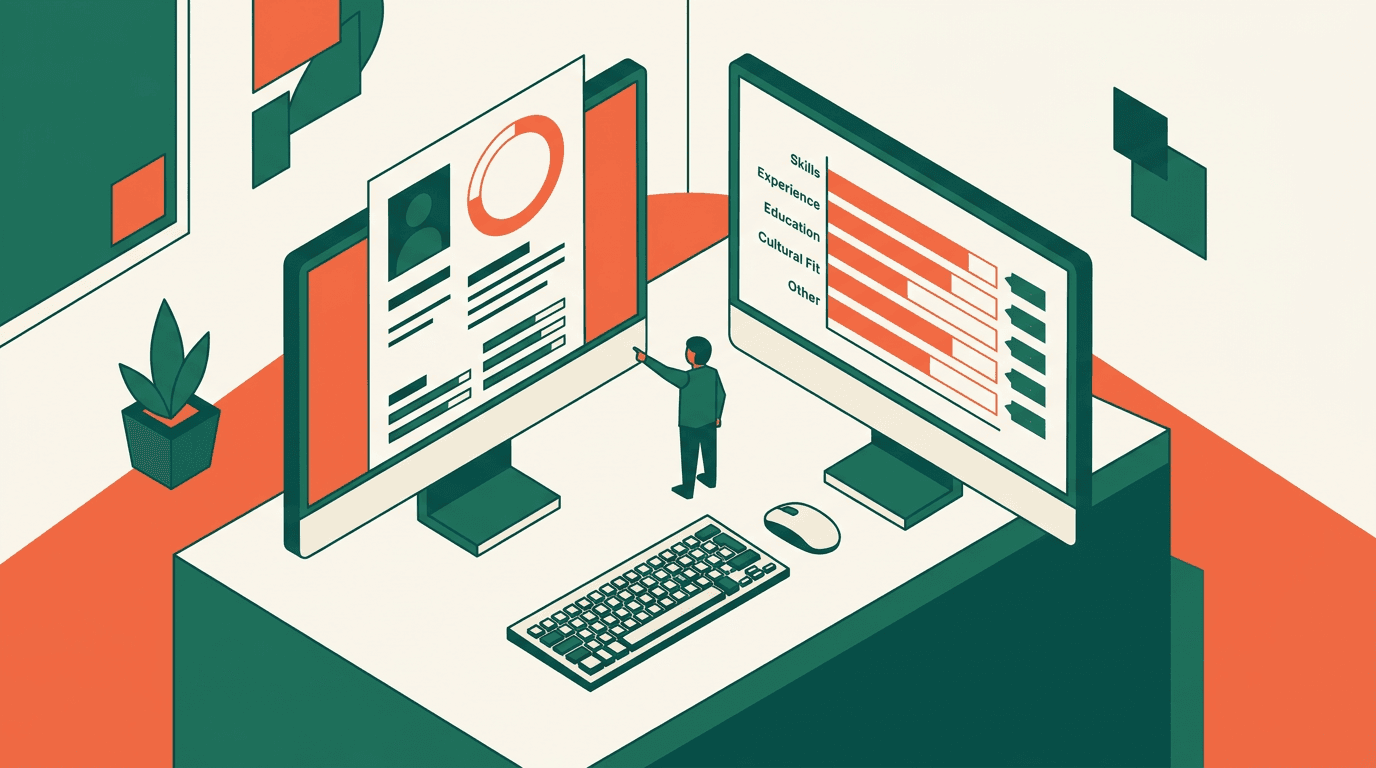

3. Use Criteria-Based Scoring

Define job requirements explicitly. Score against those requirements. "5 years of Python experience" is objective. "Culture fit" is a bias trap.

Weighted, criteria-based scoring creates a clear, auditable connection between qualifications and scores.
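As an illustration of that connection, here is a minimal Python sketch of weighted, criteria-based scoring. The criteria, weights, and profile fields are hypothetical examples, and this is a sketch of the general technique rather than any specific product's implementation. Each criterion's contribution to the total stays visible, and the profile holds only job-relevant fields, with no name, university, or zip code.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Criterion:
    name: str
    weight: float                   # relative importance, set by the recruiter
    rate: Callable[[dict], float]   # how far a profile meets the criterion, 0.0 to 1.0


def score_candidate(profile: dict, criteria: list[Criterion]) -> tuple[float, dict[str, float]]:
    """Return a 0-100 score and each criterion's contribution to it."""
    total_weight = sum(c.weight for c in criteria)
    breakdown = {}
    for c in criteria:
        fulfilment = min(max(c.rate(profile), 0.0), 1.0)   # clamp to [0, 1]
        breakdown[c.name] = 100 * c.weight * fulfilment / total_weight
    return sum(breakdown.values()), breakdown


# Hypothetical criteria for a backend developer role.
CRITERIA = [
    Criterion("5+ years of Python", 3, lambda p: p["python_years"] / 5),
    Criterion("SQL", 1, lambda p: 1.0 if "SQL" in p["skills"] else 0.0),
    Criterion("Cloud deployment", 1, lambda p: 1.0 if "AWS" in p["skills"] else 0.0),
]

if __name__ == "__main__":
    profile = {"python_years": 4, "skills": {"Python", "SQL"}}   # no name, university or zip code
    total, breakdown = score_candidate(profile, CRITERIA)
    print(round(total, 1), breakdown)
    # 68.0 {'5+ years of Python': 48.0, 'SQL': 20.0, 'Cloud deployment': 0.0}
```

Because the breakdown is kept per criterion, an auditor who finds a demographic disparity can check which criterion drives it and adjust that criterion directly.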

4. Implement Human Review

AI should rank, not decide. A human reviewer catches cases where the algorithm missed context. The combination of AI consistency and human judgment produces the best outcomes.

5. Regular Auditing

Bias monitoring isn't a one-time project. Run quarterly reviews of AI screening outcomes. Look for demographic disparities. Adjust when you find them.

6. Transparency

If a candidate asks why they were rejected, you should be able to answer. Tools that explain their scoring make bias visible — and correctable.

What HireSift Does Differently

HireSift addresses AI bias through design choices, not afterthoughts.

Dual scoring system: The CV Match score (holistic AI evaluation) and HireSift Score (weighted criteria) provide two independent assessments. When they disagree significantly, it's a signal to look closer. This built-in cross-check catches patterns that a single score would hide.

Criteria transparency: Every HireSift Score is decomposable. You see exactly which criteria contributed how much. If a criterion produces biased outcomes, you can identify and adjust it.

No black box: Recruiters see the extracted profile alongside the original CV. They can verify what the AI found. They can spot errors. They stay in control.

Skills over proxies: HireSift evaluates qualifications, experience, and skills — not university prestige, zip codes, or name patterns. The criteria you define are the criteria that matter.

The Responsibility Question

AI bias in recruiting is ultimately a human responsibility. The AI doesn't choose to discriminate. People choose what data to train it on, what criteria to use, and whether to audit the results.

Using AI without monitoring for bias is negligent. But avoiding AI entirely isn't the answer either. Manual screening has its own bias problems — and they're harder to detect because there's no audit trail.

The responsible path: use AI screening, monitor for bias systematically, and maintain human oversight at every decision point. That's not just good ethics. Under the EU AI Act, it's the law.

Less screening. More hiring.

HireSift analyzes 100 CVs in minutes — with two transparent scores, EU AI Act compliant, no credit card required.

Try free for 7 days

Related Articles

Two Scores Are Better Than One: CV Match vs. HireSift Score Explained

Why HireSift uses two scores and how CV Match and HireSift Score work together for better hiring decisions.

How AI Reads CVs — And Why It Outperforms Manual Screening

Learn how AI-powered CV screening works and why it outperforms manual review in speed, consistency, and fairness.

AI in Recruiting: The Ultimate Guide for HR Teams 2026

Everything about AI in recruiting 2026: how it works, its benefits, and what HR teams need to watch out for.